Section 5 of the Digital Personal Data Protection Act demands clear, informed consent. But when does transparency cross the line into noise?

India’s Digital Personal Data Protection Act, 2023 (DPDPA) sets a clear goal: make sure users know what’s happening with their personal data before they agree to it. On paper, that sounds simple. In practice, it raises a question that legal teams, product managers, and platform designers are quietly wrestling with across boardrooms right now.

If a user is active across ten services inside the same super-app — payments, insurance, lending, loyalty points, AI recommendations — does the platform have to show them the full statutory privacy notice each time they interact with a new feature?

A rigid reading of the law suggests yes. Common sense suggests that would be a disaster for users and compliance alike.

What Section 5 actually says

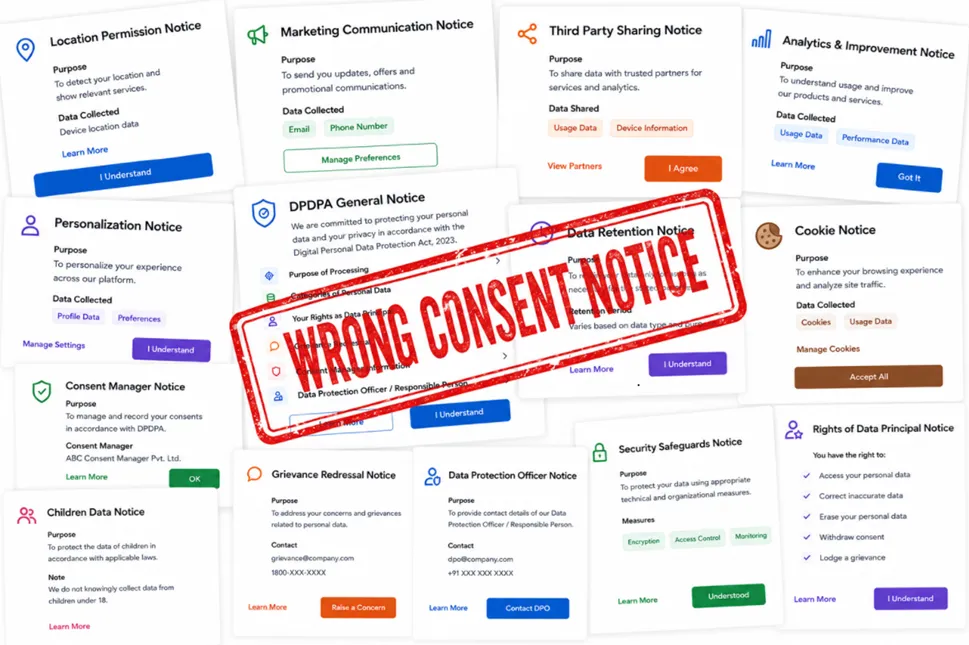

Section 5(1) of the DPDPA states that every request for consent must be accompanied — or preceded — by a notice. That notice must tell the Data Principal:

what personal data will be collected and the purpose for which it will be used; how they can exercise their rights under the Act; and how they can make a complaint to the Data Protection Board of India.

The draft DPDP Rules add more detail. Notices should be clear, standalone, and easy to understand. They should itemize both the data being collected and the specific purposes for which it will be used. Section 8(9) separately requires organizations to publish the business contact information of their Data Protection Officer (DPO), where one is required, or of another person who can answer Data Principals’ questions about processing. Section 8(10) requires an effective grievance redressal mechanism.

The legal framework, in short, is built around one idea: transparency must come before consent.

What the law does not say is whether the full statutory notice needs to appear identically every single time consent is sought within the same digital ecosystem. That silence is where the real debate begins.

The problem that emerges in practice

Consider a realistic scenario. A user downloads a financial services super-app. At onboarding, they read a detailed privacy notice — they understand what data is being collected, why, and what their rights are. They click accept.

Two days later, they activate the embedded lending module. Should they see the full notice again? What about the insurance tab? The personalized offers feature? The AI spending coach?

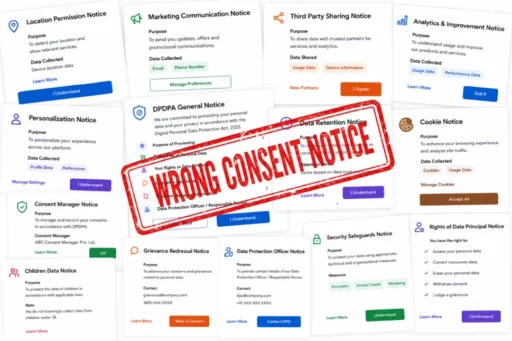

If the answer is “yes, every time,” the user journey starts to look like this:

THE NOTICE LOOP — WHAT OVER-COMPLIANCE LOOKS LIKE

At some point — and research suggests it happens quickly — users stop reading. They click through on reflex. The notice becomes a speed bump, not a safeguard. And when that happens, the law’s core objective collapses. Consent stops being informed and becomes automatic.

The goal of privacy law is meaningful awareness — not repetitive disclosure rituals. There is a difference between transparency and notification overload masquerading as transparency.

This is not a new problem

Cookie banner fatigue is well-documented. Studies of consent banners under the GDPR in Europe have reported that users shown repeated consent pop-ups become more likely to click “accept all” without reading, which is the exact opposite of what the regulation intended. Consent habituation, decision fatigue, and auto-approval behavior are measurable phenomena.

India’s DPDPA was designed to be simpler and more user-centric than GDPR. But it faces the same structural tension: the more you push transparency, the higher the risk that users tune out entirely.

The Act’s text appears to anticipate this. It emphasizes that notices should be clear and understandable, not merely present. That framing suggests the law cares about whether users actually absorb the information, not just whether a disclosure was displayed.

A more workable interpretation

Many legal and privacy practitioners working in this space expect that regulators are unlikely to demand mechanical repetition of the full statutory notice for every interaction within a known ecosystem. The more probable direction of guidance and enforcement is toward a principle-based model, one where the objective is real, meaningful awareness at key decision points.

This interpretation would support several design patterns that are already common in mature privacy systems globally:

Full notice at entry

Complete disclosure at onboarding, account creation, and the first time a materially new service is used.

Contextual prompts mid-journey

Shorter, purpose-specific prompts for routine interactions within the same ecosystem.

Fresh notice on material change

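A new full notice is triggered whenever data practices change materially, for example when a new category of data is collected or existing data is put to a new purpose.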

This layered approach respects both the letter and the intent of the DPDPA. The user is fully informed at the start. They receive relevant, bite-sized prompts when context shifts. And they get a fresh, comprehensive notice when the ground truly changes beneath them.
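The three patterns above can be read as a small decision rule. The following is a minimal sketch only, assuming a hypothetical in-memory map of which purposes each service has already disclosed in a full notice; the names `NoticeTier`, `choose_notice`, and `record_full_notice` are illustrative and do not come from the Act or the draft Rules. What counts as a "material" change is a legal judgment that this sketch reduces to "a purpose not previously disclosed."

```python
from enum import Enum


class NoticeTier(Enum):
    FULL = "full"              # complete notice (entry, new service, material change)
    CONTEXTUAL = "contextual"  # short, purpose-specific prompt


def choose_notice(covered: dict[str, set[str]], service: str,
                  purposes: set[str]) -> NoticeTier:
    """Pick a notice tier for one interaction.

    `covered` maps a service name to the purposes already disclosed to this
    user in a full notice (an assumed structure, for illustration only).
    """
    if service not in covered:
        # First use of this service: full notice at entry.
        return NoticeTier.FULL
    if not purposes <= covered[service]:
        # At least one purpose was never disclosed: treat as a material change.
        return NoticeTier.FULL
    # Everything requested was already disclosed: a short prompt is enough.
    return NoticeTier.CONTEXTUAL


def record_full_notice(covered: dict[str, set[str]], service: str,
                       purposes: set[str]) -> None:
    """Remember that a full notice covering `purposes` was shown."""
    covered.setdefault(service, set()).update(purposes)
```

In this sketch, a returning payments user would get a contextual prompt for an already disclosed purpose, while activating the lending module for the first time, or adding a purpose such as ad targeting to payments, would fall back to a full notice.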

The AI ecosystem makes this harder

The notice-fatigue problem becomes much more complex in the context of AI agents, ambient computing, and automated decision systems. Future platforms may process dozens of micro-authorizations per second — personalized recommendations, contextual offers, behavioral inferences — with no obvious human decision point in between.

Static notice models were designed for a world where a human is sitting at a screen, reading text, and clicking buttons. That world is already shifting. As AI agents increasingly act on behalf of users in real time, the traditional notice-and-consent model may need to evolve substantially.

Some researchers and technologists have started exploring machine-readable consent manifests — structured data files that an AI agent can interpret and act on, encoding a user’s privacy preferences without requiring them to read a notice every time. Others point to delegated consent frameworks, where a user sets preferences once and an authorized intermediary handles subsequent micro-decisions within agreed boundaries.

These models are not yet part of the DPDPA’s current framework. But they may need to become part of it as the technology landscape evolves and the rules are refined.
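To make the idea of a machine-readable consent manifest more concrete, here is a purely hypothetical sketch. The JSON layout, the field names (`principal_id`, `allow`, `purpose`, `data`), and the `is_permitted` helper are all assumptions made for illustration; no such format is defined by the DPDPA, the draft Rules, or any standard referenced in this article.

```python
import json

# Hypothetical manifest: the purposes a user has pre-approved, and the data
# categories each purpose may touch. The format is invented for this example.
MANIFEST_JSON = """
{
  "principal_id": "user-123",
  "allow": [
    {"purpose": "personalized_offers", "data": ["transaction_history"]},
    {"purpose": "spending_insights",
     "data": ["transaction_history", "account_balance"]}
  ]
}
"""


def is_permitted(manifest: dict, purpose: str, data_categories: set[str]) -> bool:
    """Return True only if the manifest explicitly allows this purpose
    for every requested data category."""
    for rule in manifest["allow"]:
        if rule["purpose"] == purpose and data_categories <= set(rule["data"]):
            return True
    # Default deny: anything not listed goes back to the user for a decision.
    return False


manifest = json.loads(MANIFEST_JSON)
```

The default-deny return is the important design choice in this sketch: an agent acting on the user's behalf can approve only what was set out in advance, and any new purpose or data category falls outside the delegated boundary.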

What organizations should do now

While the final rules are still being shaped, organizations operating under the DPDPA should focus on building notice architectures that are layered, proportional, and genuinely user-centered — not just technically compliant.

That means investing in onboarding notices that are actually clear, not just legally dense. It means designing contextual prompts that feel helpful rather than bureaucratic. It means building “learn more” pathways that let curious users go deeper without forcing indifferent users through walls of text.

And critically, it means maintaining detailed internal records of what was disclosed, when, and in what form — so that when enforcement questions arise, the organization can demonstrate genuine intent to inform, not just a checkbox exercise.
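One way to picture such a record is sketched below. This is an illustrative assumption, not a prescribed format: the `NoticeRecord` fields and the `make_record` helper are hypothetical, and a real system would also need durable, tamper-resistant storage. The sketch stores a SHA-256 fingerprint of the exact text shown, so the organization can later match a record to a specific notice version.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class NoticeRecord:
    user_id: str
    service: str
    form: str         # in what form, e.g. "full" or "contextual"
    shown_at: str     # when: ISO 8601 timestamp in UTC
    text_sha256: str  # what: fingerprint of the exact text displayed


def make_record(user_id: str, service: str, form: str, notice_text: str,
                now: Optional[datetime] = None) -> NoticeRecord:
    """Build an immutable record of one notice display."""
    shown = now or datetime.now(timezone.utc)
    digest = hashlib.sha256(notice_text.encode("utf-8")).hexdigest()
    return NoticeRecord(user_id, service, form, shown.isoformat(), digest)
```

Hashing the text rather than storing a loose version label means that even a one-word change to a notice produces a different fingerprint, which makes it easier to show exactly what a given user saw.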

THE QUESTION THAT MATTERS

The DPDPA’s notice architecture is still evolving. But organizations that design for genuine understanding — rather than defensive repetition — are likely to be on the right side of wherever the law lands.

This article reflects the author’s interpretation of the DPDPA 2023 and draft Rules as available at time of writing. It does not constitute legal advice. Organizations should seek qualified legal counsel for compliance decisions.